I wanted to understand how large language models actually work: not by reading papers, but by building one from scratch. So over a weekend, I pair-programmed with Claude and built Slipstream: a 51-million-parameter transformer language model trained entirely on Formula 1 Wikipedia articles.

The goal was never to build something production-ready. It was to get my hands dirty with every layer of the stack (tokenization, attention heads, training loops, loss curves) and to walk away with real intuition about where these models succeed, where they break, and why scale matters as much as everyone says it does.

The Build

Slipstream evolved through three phases, each one a lesson in what actually moves the needle when training a language model.

Phase 1: Token Forge

I started small. Absurdly small. A 0.8M parameter model trained on 6KB of Shakespeare using character-level tokenization. The kind of thing you build just to prove the plumbing works: data loading, the transformer architecture, the training loop, text generation. It worked. Barely. But it proved out the foundation.

Phase 2: Pivoting to F1

Shakespeare was a fine test bed, but I wanted a domain I actually cared about. I scraped 408 Formula 1 Wikipedia articles, roughly 10MB of text, and made three critical upgrades:

- BPE tokenization via tiktoken (GPT-2 compatible, 50,257 token vocabulary)

- Scaled architecture: 8 layers, 8 attention heads, embedding dimension of 512

- ~2.5 million training tokens from the scraped corpus

The switch from character-level to BPE tokenization was the single biggest quality improvement across the entire project. It's the difference between the model seeing individual letters and seeing meaningful word pieces. Everything downstream got better.
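To make the letters-versus-word-pieces difference concrete, here is a toy sketch of the idea behind BPE: start from characters and repeatedly fuse the most frequent adjacent pair. This is not tiktoken's implementation (which works on bytes and ships with pre-learned GPT-2 merges); the function names and the sample string are made up for illustration.

```python
from collections import Counter


def char_tokenize(text):
    """Character-level tokenization: one token per character."""
    return list(text)


def apply_merge(tokens, a, b):
    """Replace every adjacent (a, b) pair with the fused token a + b."""
    out = []
    i = 0
    while i < len(tokens):
        if i + 1 < len(tokens) and tokens[i] == a and tokens[i + 1] == b:
            out.append(a + b)
            i += 2
        else:
            out.append(tokens[i])
            i += 1
    return out


def learn_bpe_merges(text, num_merges):
    """Learn up to num_merges merge rules, most frequent pair first."""
    tokens = char_tokenize(text)
    merges = []
    for _ in range(num_merges):
        pairs = Counter(zip(tokens, tokens[1:]))
        if not pairs:
            break
        (a, b), count = pairs.most_common(1)[0]
        if count < 2:
            break  # nothing repeats, so further merges would not compress
        merges.append((a, b))
        tokens = apply_merge(tokens, a, b)
    return merges, tokens


# Illustrative sample text, not taken from the training corpus.
text = "pole position pole position pole position"
char_tokens = char_tokenize(text)
merges, bpe_tokens = learn_bpe_merges(text, num_merges=20)

print(len(char_tokens), "character tokens")
print(len(bpe_tokens), "tokens after toy BPE merges")
print(bpe_tokens)
```

The same text ends up as far fewer, longer tokens, which means each position in the context window carries more meaning. In the real project the equivalent step would be a single call into tiktoken's GPT-2 encoding rather than hand-rolled merges.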

Phase 3: Training

Training ran for 3.65 hours on a MacBook Pro M5 Pro, pushing about 10,000 tokens per second through the Apple Silicon GPU via PyTorch's MPS backend. The model hit its best validation loss of 4.12 at step 2,000, and then started overfitting. Hard. By step 8,000, training loss had cratered to 0.26 while validation loss climbed steadily.
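A common way to handle this pattern is to keep whichever checkpoint has the lowest validation loss and stop once it has gone a few evaluations without improving. The sketch below shows that bookkeeping; the class name and the `patience` parameter are hypothetical and not taken from the Slipstream code.

```python
class BestCheckpointTracker:
    """Track the best validation loss and signal when to stop training."""

    def __init__(self, patience):
        self.patience = patience          # evaluations to wait without improvement
        self.best_loss = float("inf")
        self.best_step = None
        self.evals_since_best = 0

    def update(self, step, val_loss):
        """Record one evaluation; return True if training should stop."""
        if val_loss < self.best_loss:
            # New best: this is the point where a checkpoint would be saved.
            self.best_loss = val_loss
            self.best_step = step
            self.evals_since_best = 0
        else:
            self.evals_since_best += 1
        return self.evals_since_best >= self.patience
```

In a training loop you would call `update(step, val_loss)` after each evaluation, save the model weights whenever `best_step` changes, and break out of the loop when it returns `True`. With a run like the one above, that would leave you holding the step-2,000 weights instead of the overfit ones.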

This wasn't a surprise. The data-to-parameter ratio told the whole story.

The Ratio Problem

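This is the lesson that hit hardest. Slipstream has 51 million parameters trained on 2.5 million tokens, a data-to-parameter ratio of roughly 0.05x, or about one token for every twenty parameters. The commonly cited rule of thumb for compute-optimal training, from the Chinchilla scaling-law work, is around 20 tokens per parameter. I was off by a factor of 400.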

51 million parameters sounds like a lot until you realize GPT-4 is reported (though never officially confirmed) to have roughly 1.8 trillion. And even by those unofficial figures, GPT-4 was trained on orders of magnitude more data relative to its parameter count. Scale isn't just about making the model bigger. It's about keeping data and parameters in balance. Without enough data, the model simply memorizes instead of learning general patterns.
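The arithmetic behind the ratio is worth spelling out. This short sketch uses only the figures quoted in this post (parameter count, approximate token count, training time, throughput); the 20x target is the rule of thumb mentioned above, and the pass count assumes throughput stayed roughly constant for the whole run.

```python
params = 51_197_440        # parameter count reported for Slipstream
train_tokens = 2_500_000   # approximate training tokens in the scraped corpus
target_ratio = 20          # rule-of-thumb tokens per parameter

ratio = train_tokens / params
tokens_needed = target_ratio * params
shortfall = tokens_needed / train_tokens

# Throughput figures from the training run.
hours = 3.65
tokens_per_second = 10_000
tokens_processed = hours * 3600 * tokens_per_second
passes_over_data = tokens_processed / train_tokens

print(f"tokens per parameter: {ratio:.3f}")
print(f"tokens needed at 20x: {tokens_needed:,}")
print(f"shortfall factor: {shortfall:.0f}x")
print(f"approximate passes over the corpus: {passes_over_data:.0f}")
```

With the unrounded numbers, the model would need about a billion tokens to hit the 20x target, a shortfall of roughly 410x (400x when the ratio is rounded to 0.05). The throughput figures also imply the model saw the same 2.5 million tokens on the order of fifty times, which is exactly the setup in which memorization beats generalization.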

What It Can (and Can't) Do

Slipstream generates text that looks like Formula 1 content. It knows the shape of the domain: team names, circuits, championship structures, the cadence of racing prose. But it gets nearly every fact wrong. It'll confidently tell you about races that never happened, attribute wins to the wrong drivers, and invent plausible-sounding statistics.

I also tried fine-tuning on 423 hand-crafted Q&A pairs. The result: about 24% accuracy (12 out of 49 correct answers). Enough to show the approach has potential, nowhere near enough to be useful. More data, more parameters, more training time. The usual prescription.
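For a sense of what scoring a run like this can look like, here is a minimal exact-match sketch. It is an assumption about method, not a description of how Slipstream's answers were actually graded; `normalize` and `accuracy` are hypothetical helpers, and exact match is a deliberately strict criterion.

```python
import string


def normalize(answer):
    """Lowercase, strip punctuation, and collapse whitespace."""
    lowered = answer.lower().strip()
    no_punct = lowered.translate(str.maketrans("", "", string.punctuation))
    return " ".join(no_punct.split())


def accuracy(predictions, references):
    """Fraction of predictions that match their reference after normalization."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    correct = sum(
        normalize(pred) == normalize(ref)
        for pred, ref in zip(predictions, references)
    )
    return correct / len(references)


# The headline figure from the fine-tuning run: 12 correct out of 49.
print(f"{12 / 49:.1%}")
```

A stricter or looser matching rule (substring match, manual grading) would shift the number, so a figure like this is best read alongside the scoring method that produced it.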

The Stack

| Component | Details |
| --- | --- |
| Framework | PyTorch |
| Tokenizer | BPE via tiktoken (GPT-2 compatible) |
| Hardware | Apple Silicon GPU (MPS backend) |
| Architecture | 8 layers, 8 heads, embed_dim=512 |
| Parameters | 51,197,440 |
| Training data | 408 F1 Wikipedia articles (~10MB) |
| Training time | 3.65 hours |
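The parameter count can be roughly reconstructed from the architecture numbers. The sketch below is a back-of-the-envelope estimate, assuming a GPT-2 style block (4x feed-forward expansion) and an output layer that shares weights with the token embedding; neither detail is stated here, and it ignores position embeddings, biases, and layer norms.

```python
vocab_size = 50_257   # GPT-2 BPE vocabulary
embed_dim = 512
n_layers = 8
mlp_ratio = 4         # assumption: GPT-2 style 4x feed-forward expansion

# Token embedding table (assumed shared with the output projection).
token_embedding = vocab_size * embed_dim

# Q, K, V and output projections: four embed_dim x embed_dim matrices.
attention_per_layer = 4 * embed_dim * embed_dim

# Feed-forward up-projection and down-projection.
mlp_per_layer = 2 * mlp_ratio * embed_dim * embed_dim

blocks = n_layers * (attention_per_layer + mlp_per_layer)
estimate = token_embedding + blocks
reported = 51_197_440

print(f"token embedding: {token_embedding:,}")
print(f"transformer blocks: {blocks:,}")
print(f"estimate: {estimate:,}")
print(f"estimate / reported: {estimate / reported:.3f}")
```

The estimate lands within about 1% of the reported figure, and it shows that roughly half of the parameters sit in the vocabulary embedding rather than in the transformer blocks. The number of attention heads does not appear in the formula: splitting 512 dimensions across 8 heads changes how the projections are used, not how many weights they contain.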

What I Took Away

Building Slipstream didn't teach me how to build a production LLM. It taught me something more useful: intuition for why things work the way they do at scale. When I read about training runs, scaling laws, and data pipeline decisions now, I'm not just parsing abstractions. I've felt the failure modes firsthand.

A few specific takeaways:

- Tokenization is foundational. BPE over character-level tokenization was a bigger win than doubling the model size.

- Overfitting is inevitable without enough data. No amount of architectural cleverness compensates for a 0.05x data ratio.

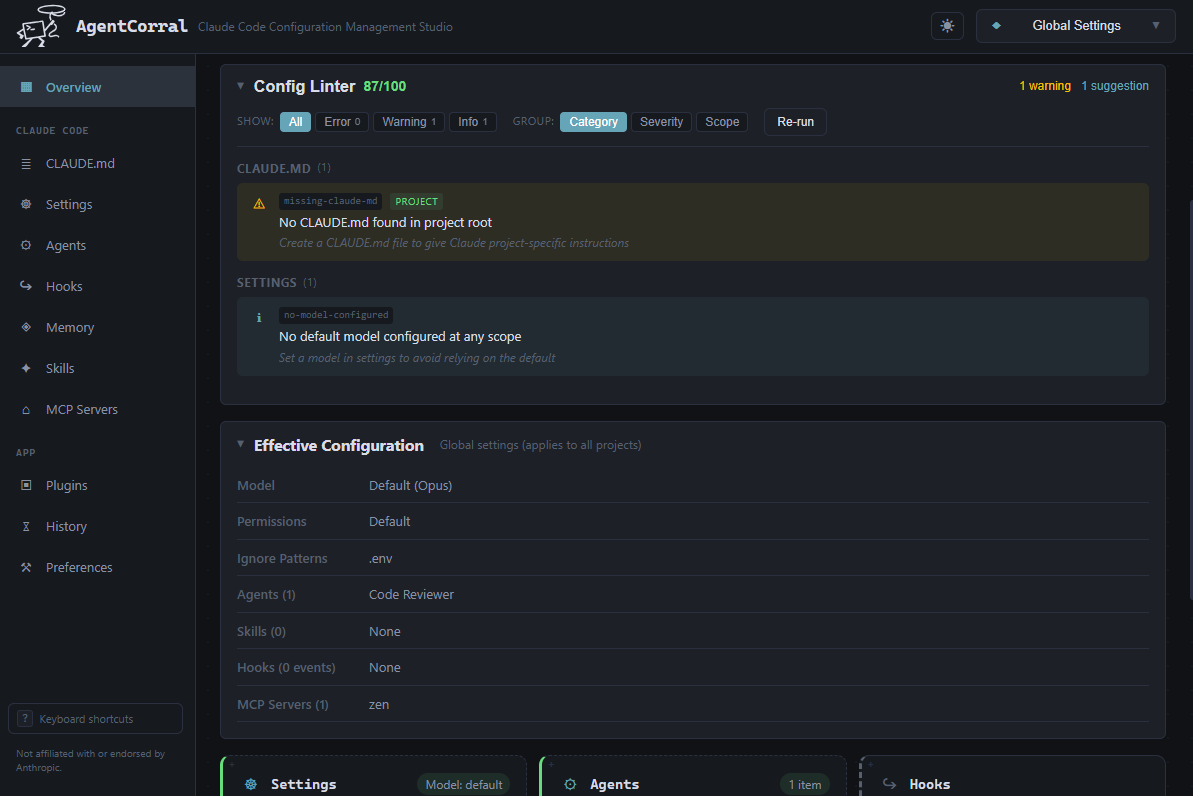

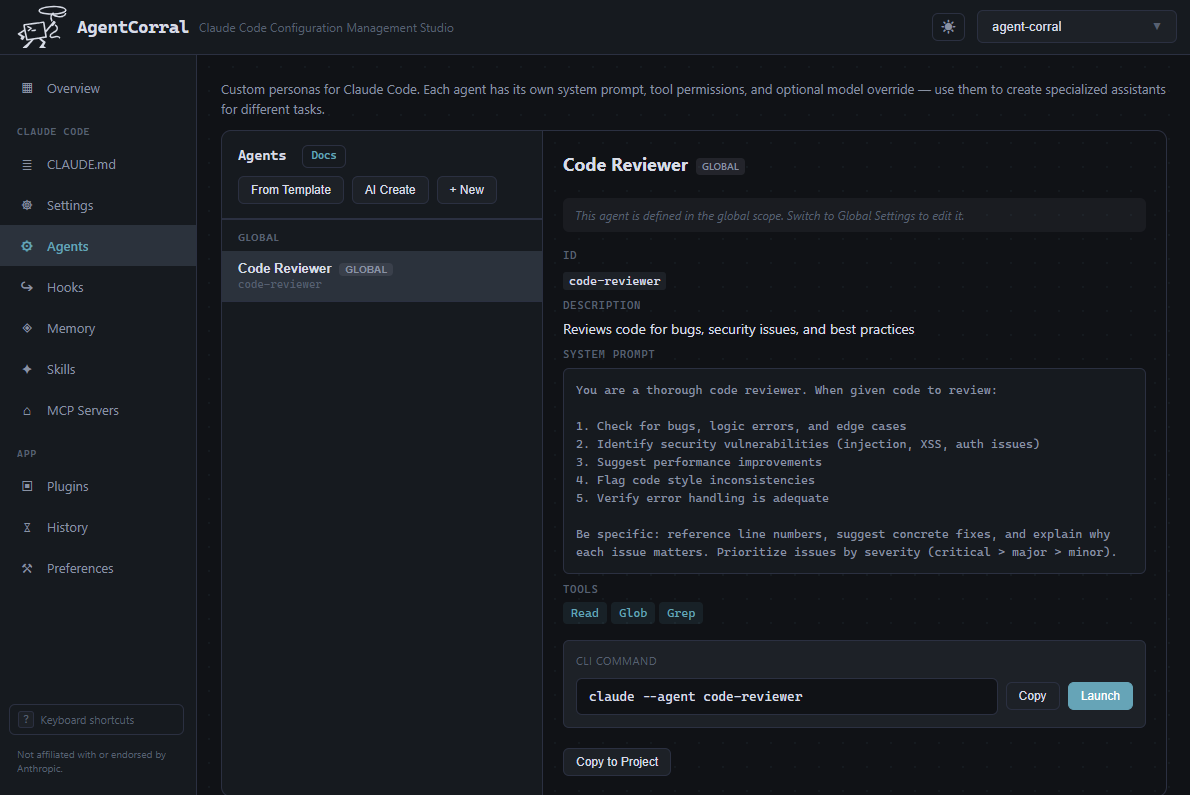

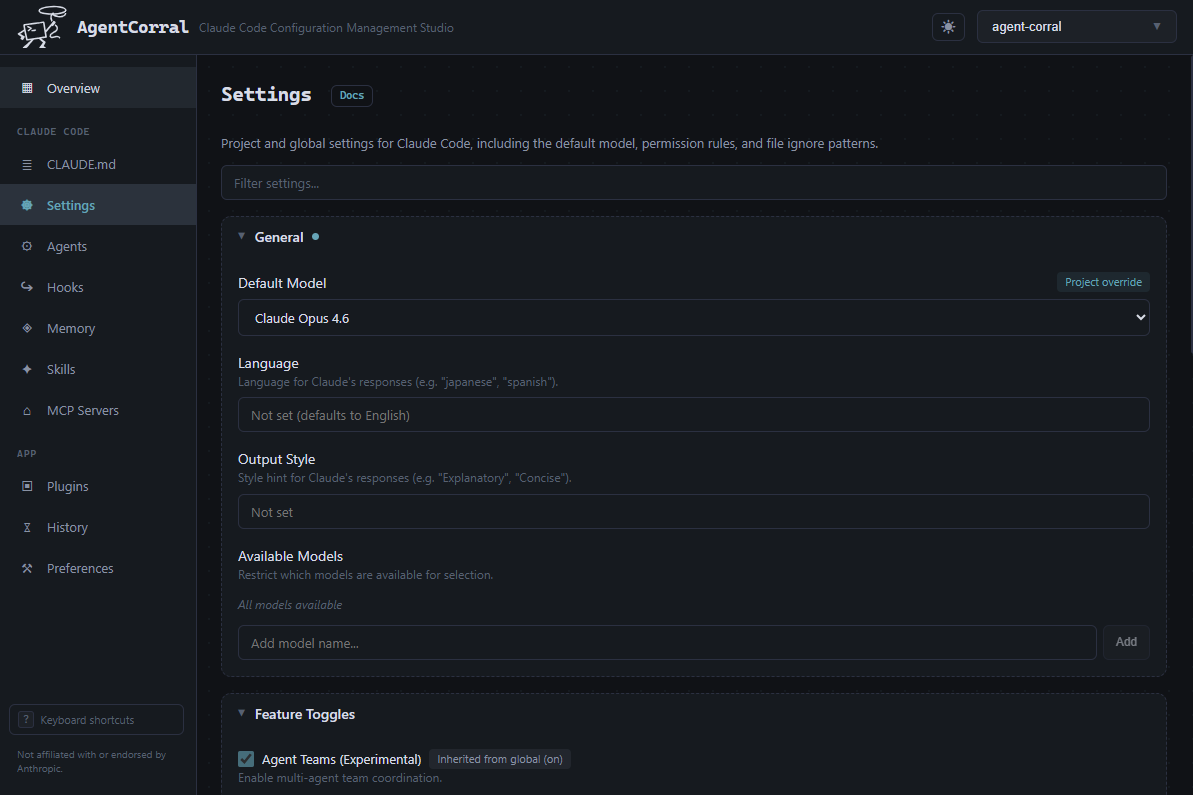

- Pair-programming with AI accelerates everything. Claude helped me move through the entire project in a weekend: debugging CUDA/MPS issues, iterating on architecture decisions, writing evaluation harnesses. The model itself is modest; the velocity of building it was not.

- Small models are great teachers. You can't inspect GPT-4's attention patterns or watch its loss curves in real time. With 51M parameters, everything is visible, everything is debuggable, and the feedback loops are fast.

The full project is open source: github.com/llrowat/slipstream