Since around December 2025, I've handed off basically all code writing to Claude Code. It isn't an experiment or a productivity hack I'm trying out; it's just how I work now. The code gets written, but I don't write it.

Nobody can credibly predict what software development looks like long term, since AI is moving fast enough that anything past a year or two is a guessing game. But I don't need to predict the future. The shift already happened, faster than I expected, and it changed what the job actually is. The developers who thrive aren't the ones who write code; they're the ones who think most clearly about what needs to be built and why.

Requirements Are the Job Now

Requirements work is the highest-leverage thing most developers underinvest in. When AI generates a working implementation from a well-written spec, the spec is the work.

Defining business goals precisely. Translating vague stakeholder intent into actual system behavior. Specifying edge cases, failure modes, performance expectations. None of that is new work, but it used to be a step you rushed through to get to writing code. Now it's the whole job.

The developer who can take an ambiguous ask and produce a clear, testable description of what "done" looks like is going to be far more useful than the one who bangs out a feature in a weekend but can't explain what it's supposed to do.
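As a rough illustration of what a "clear, testable description" can look like, here is a minimal sketch. The business rule is invented for the example: imagine the ambiguous ask is "give people a discount on big orders," and the spec pins it down as "orders of 100.00 or more get 10% off, rounded to the nearest cent, and negative subtotals are rejected." The function name, threshold, and rate are all hypothetical; the point is that each test encodes one decision the vague ask left open.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical rule, invented for illustration only:
# "orders of 100.00 or more get 10% off, rounded to the nearest cent"
THRESHOLD = Decimal("100.00")
RATE = Decimal("0.10")


def discounted_total(subtotal: Decimal) -> Decimal:
    """Apply the (hypothetical) large-order discount to a subtotal."""
    if subtotal < 0:
        raise ValueError("subtotal must not be negative")
    if subtotal >= THRESHOLD:
        subtotal = subtotal * (Decimal("1") - RATE)
    return subtotal.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Each test answers a question the original ask did not.

def test_below_threshold_is_unchanged():
    assert discounted_total(Decimal("99.99")) == Decimal("99.99")


def test_threshold_is_inclusive():
    # "Big orders" now has an exact boundary: 100.00 qualifies.
    assert discounted_total(Decimal("100.00")) == Decimal("90.00")


def test_result_is_rounded_half_up_to_cents():
    # 100.05 * 0.90 = 90.045, which rounds half-up to 90.05.
    assert discounted_total(Decimal("100.05")) == Decimal("90.05")


def test_negative_subtotal_is_rejected():
    try:
        discounted_total(Decimal("-1.00"))
    except ValueError:
        return
    raise AssertionError("expected ValueError for a negative subtotal")
```

These run under pytest or by calling the functions directly. Whether the boundary should be inclusive, or which rounding mode applies, is exactly the kind of question that has to be settled with stakeholders before any implementation, generated or not, can be called done.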

Judgment Still Needs a Human

The temptation with AI-assisted development is to move fast and skip the thinking. You can generate a working implementation in minutes, but "working" and "correct under all conditions" are very different things.

What happens when this service goes down? What data are we exposing? What regulations apply? What's the blast radius of a bad deploy? These questions matter more, not less, when the cost of generating an implementation drops to near zero. Anyone can ship a feature. Not everyone can tell you what breaks when it fails, what it costs to maintain, or what it exposes to an attacker.

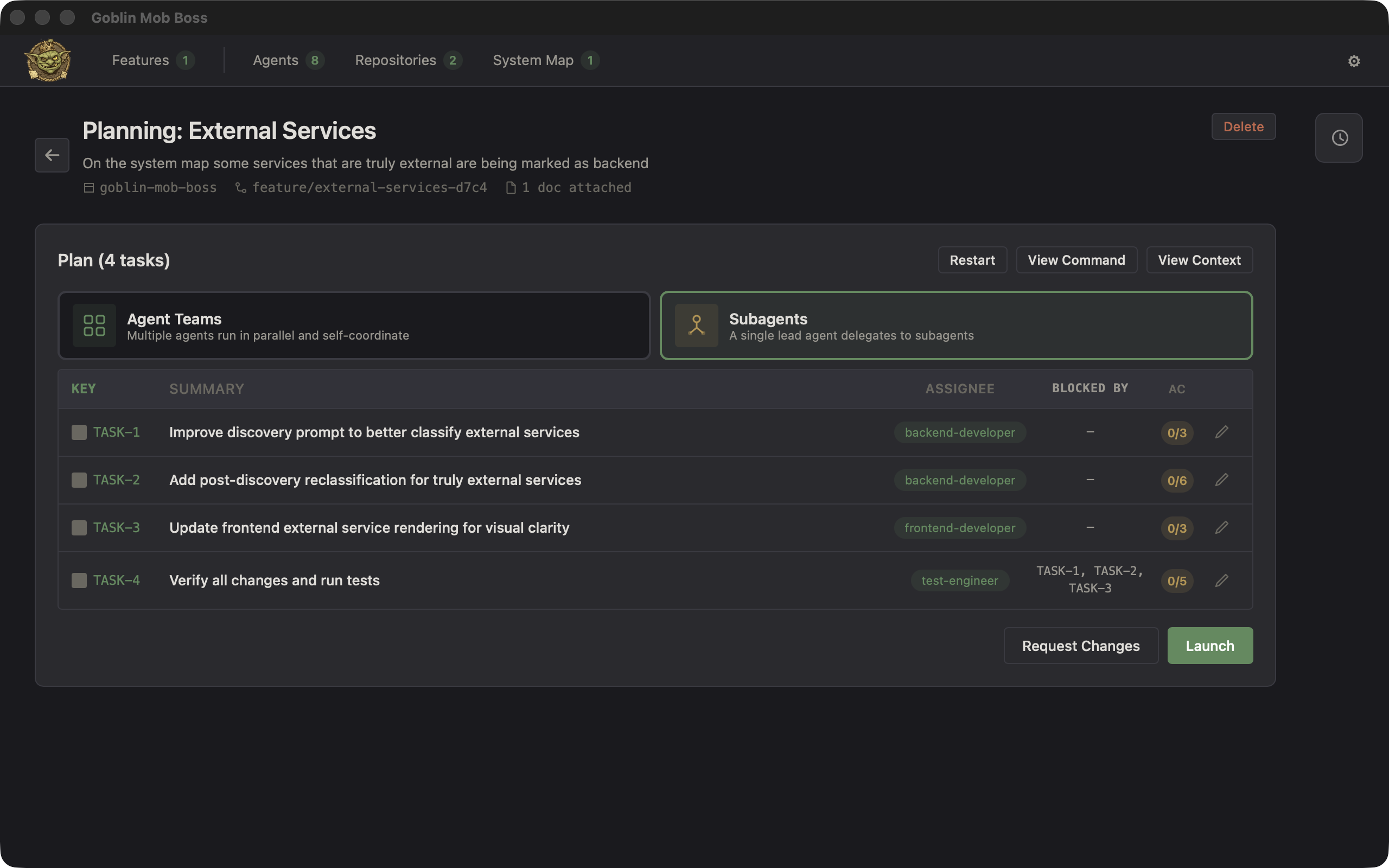

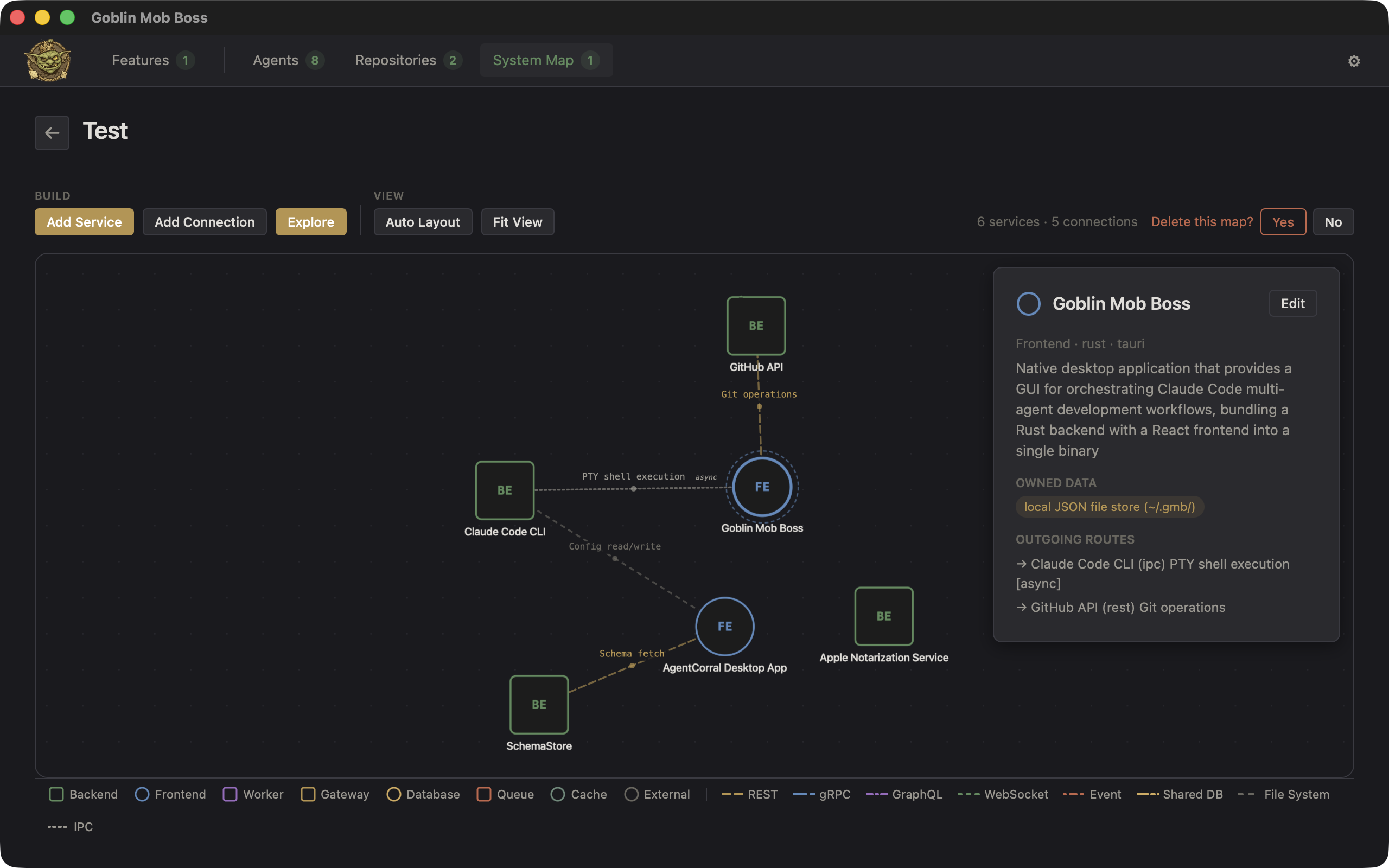

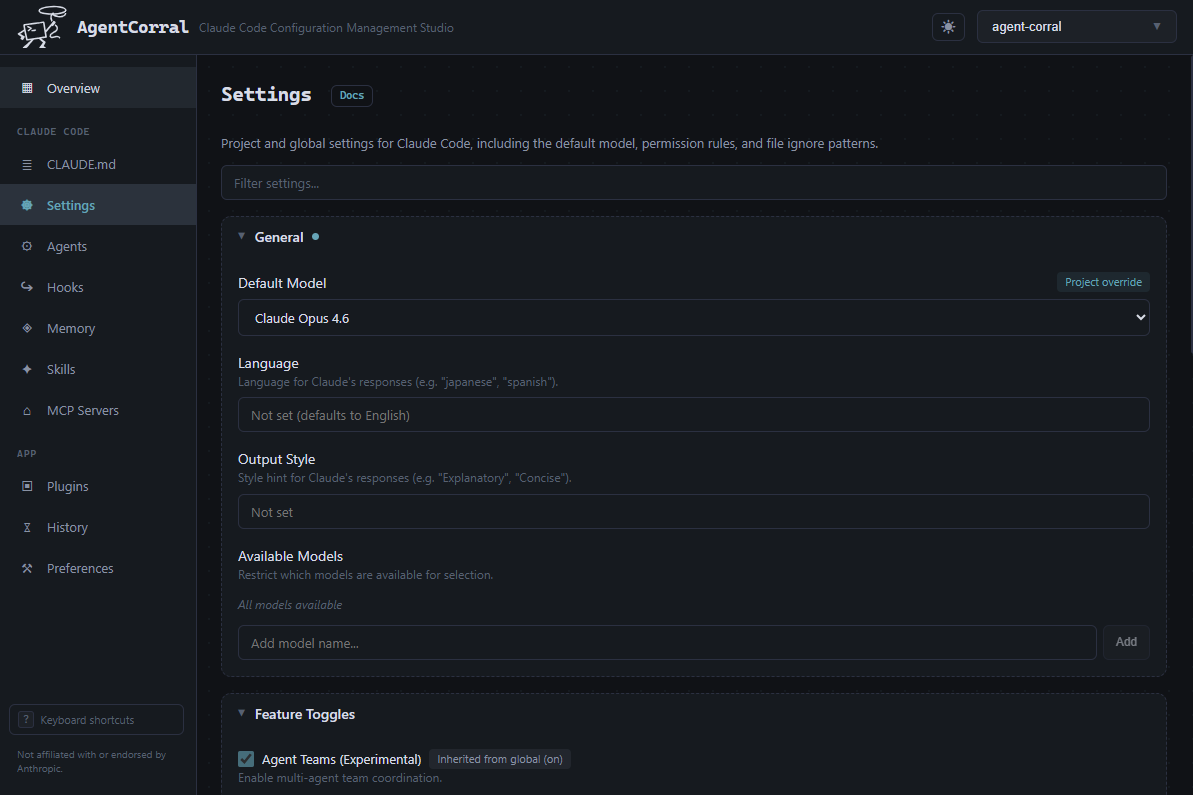

The same is true at the architecture level. AI generates code in any framework you point it at, but it doesn't have opinions about whether that framework is the right choice for your constraints. Picking the stack, balancing velocity against long-term health, optimizing for change rather than initial delivery: these are calls that need context AI doesn't have, and the speed of implementation just means you'll build the wrong thing faster if the foundation is wrong.

Integration is where it gets hardest. The complexity of modern software isn't in the components; it's in how they connect: APIs, data schemas, service boundaries, event contracts, authentication flows. Two teams holding different assumptions about an API contract still produce one of the nastier classes of bugs to find, and resolving that isn't work you can delegate to a code generator, because the constraints are as much political as they are technical.
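To make the contract-mismatch problem concrete, here is a small sketch under invented assumptions: a hypothetical "order created" event where the consuming team expects an integer `amount_cents`, while the producing team sends a float `amount`. The contract, field names, and `contract_violations` helper are all made up for illustration. A check like this only surfaces the mechanical disagreement; deciding whose assumption wins is still a conversation between people.

```python
from typing import Any

# Hypothetical contract the consuming team believes it agreed to.
ORDER_CREATED_CONTRACT: dict[str, type] = {
    "order_id": str,
    "amount_cents": int,
    "currency": str,
}


def contract_violations(payload: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """Return human-readable differences between a payload and a contract."""
    problems: list[str] = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif type(payload[field]) is not expected:
            # Exact type match on purpose, so True/False is not accepted as an int.
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    for field in payload:
        if field not in contract:
            problems.append(f"unexpected field: {field}")
    return problems


# What the producing team actually sends (also hypothetical).
producer_payload = {"order_id": "A-1", "amount": 19.99, "currency": "USD"}

for problem in contract_violations(producer_payload, ORDER_CREATED_CONTRACT):
    print(problem)
# prints:
# missing field: amount_cents
# unexpected field: amount
```

Both payload shapes are individually reasonable, which is why this kind of bug survives code review on each side and only shows up where the two systems meet.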

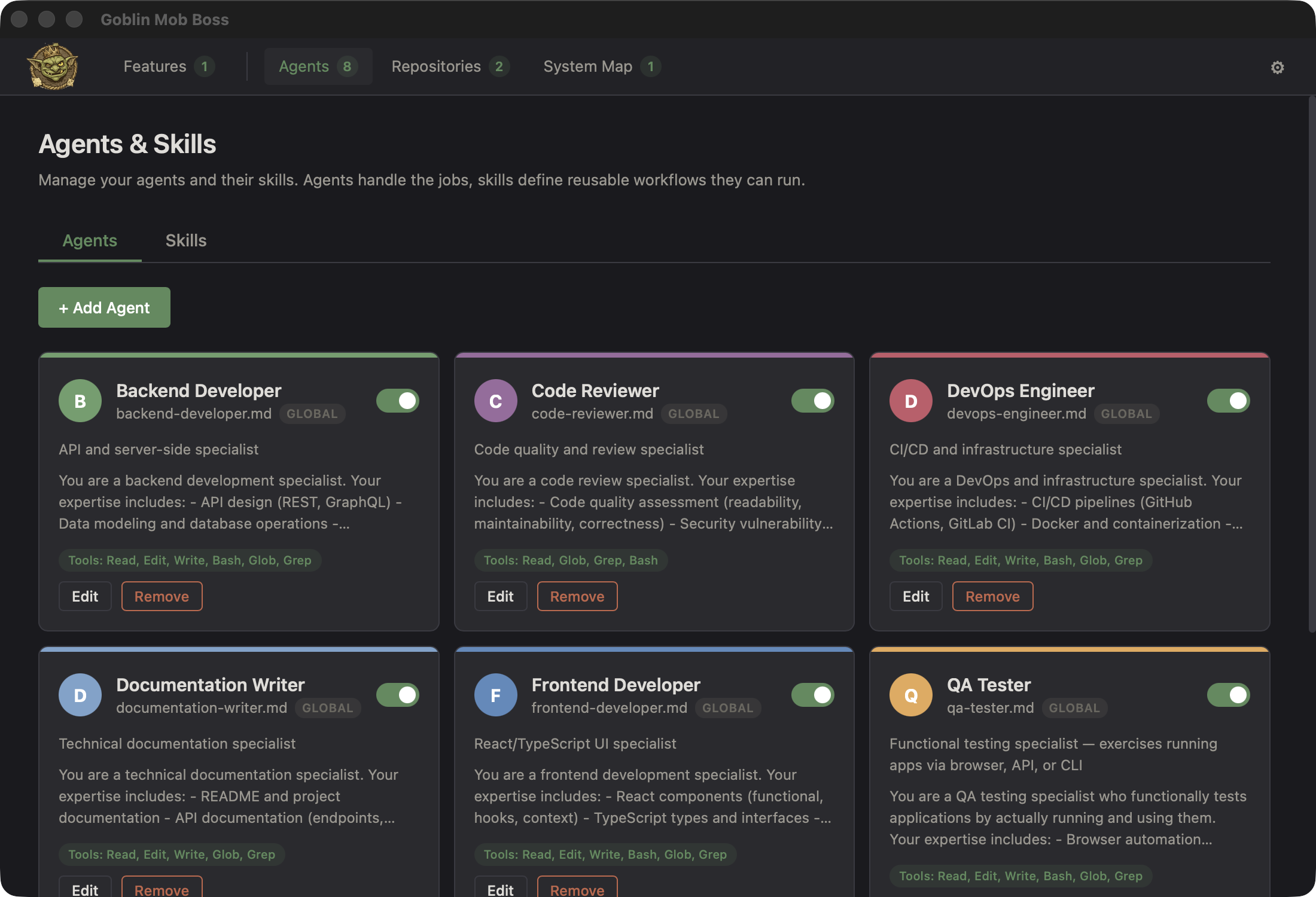

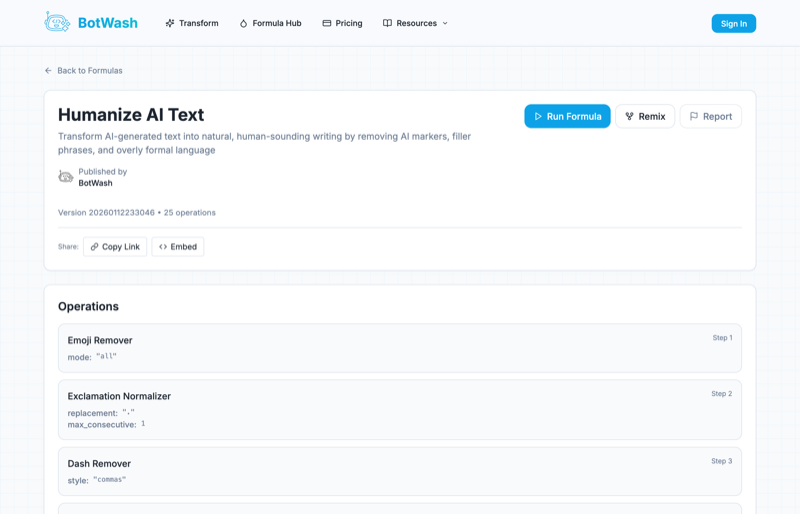

Orchestrating AI Is a Skill

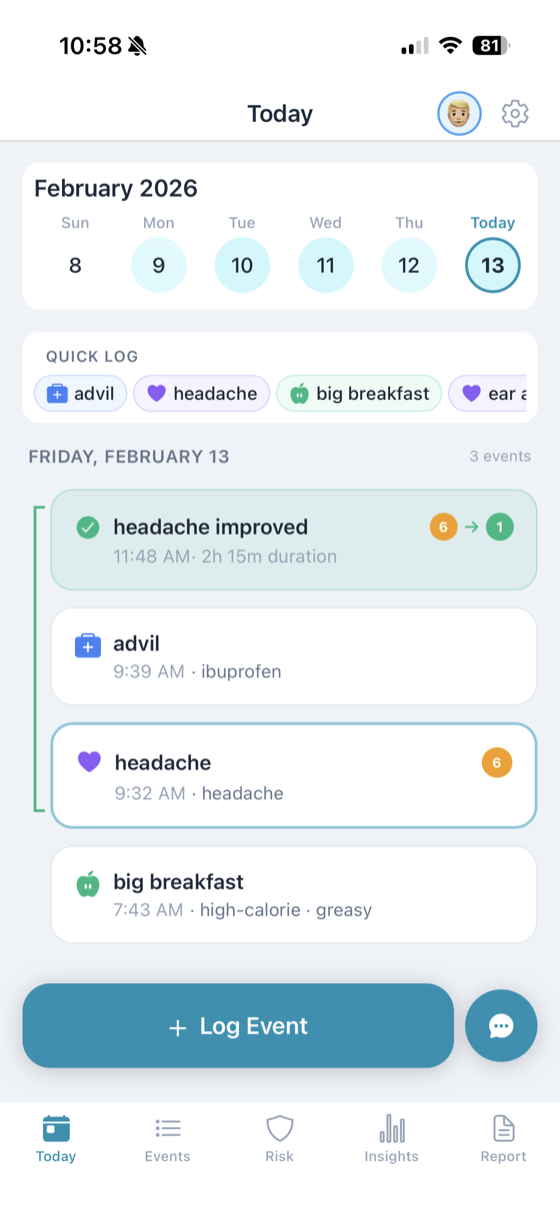

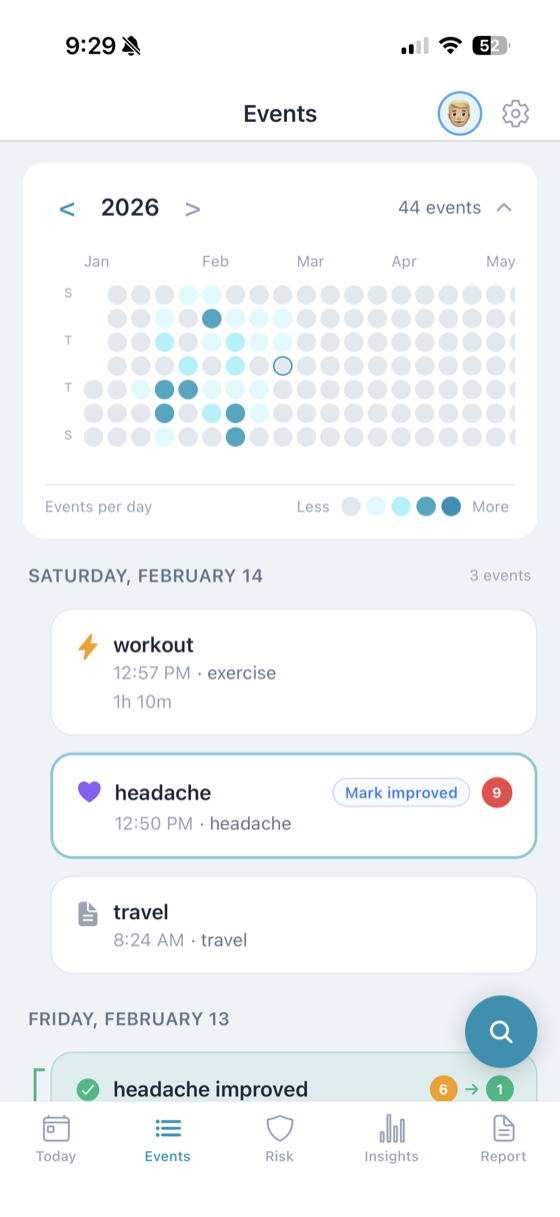

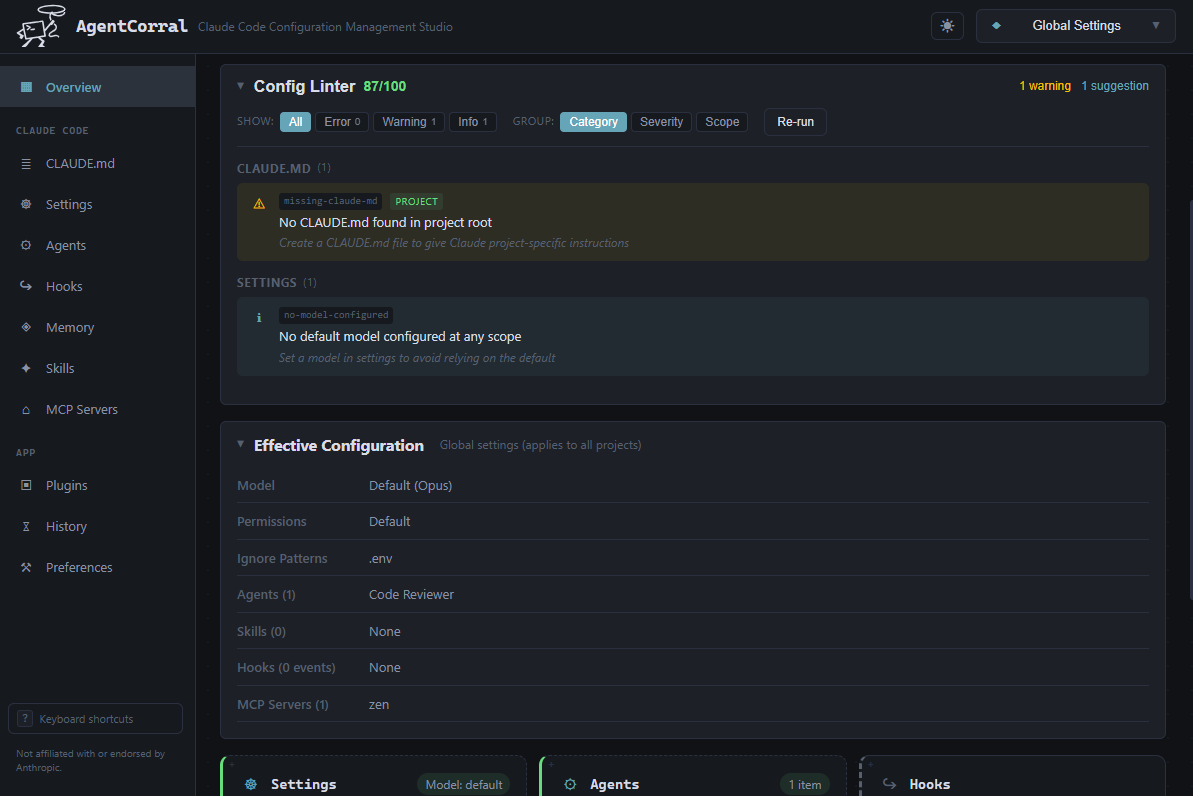

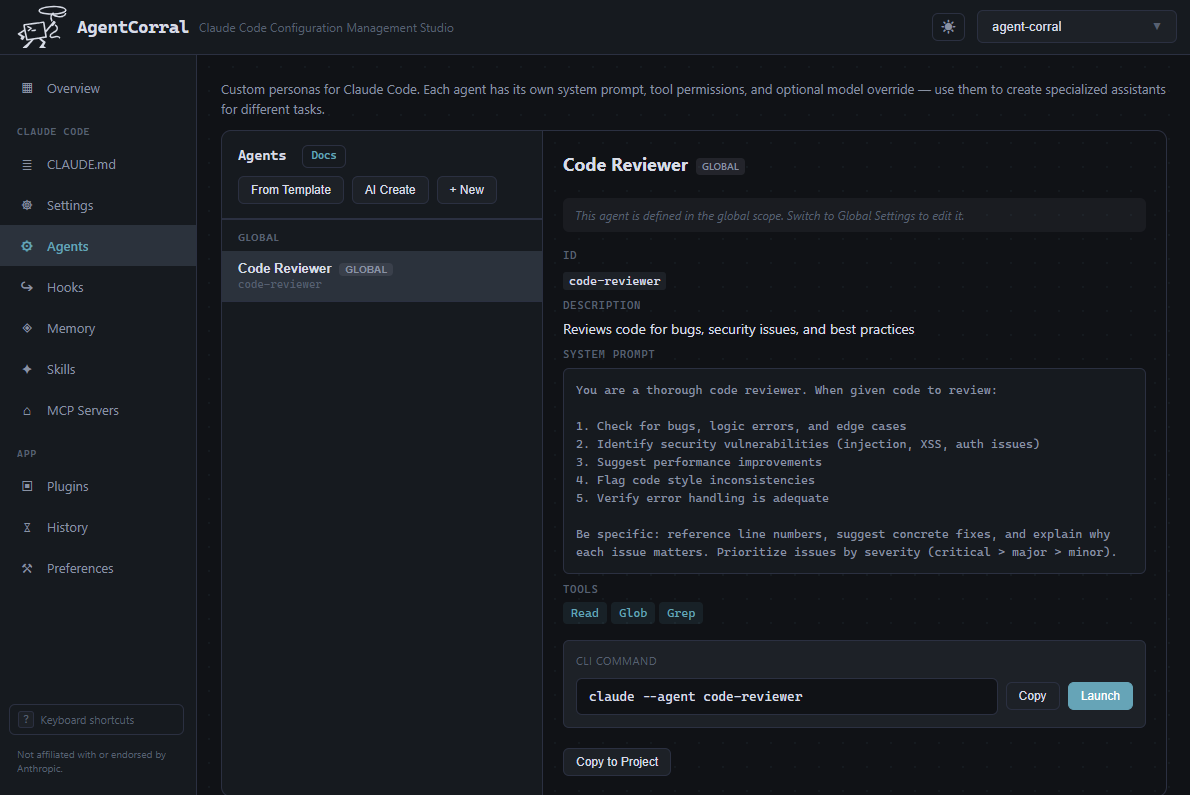

This is the genuinely new part. I don't use Claude Code as a typing assistant. I use it the way I'd work with a fast, tireless junior developer who needs clear direction: I describe intent, it generates an implementation, and I review and iterate until the tests pass. The code is an artifact derived from a spec. I ship it. I didn't type it.

The ratio of time spent writing code to time spent specifying it has completely flipped. Almost all of my time goes into describing what I want and designing the tests that prove it works, and the work feels different than it used to.

Own the Outcome

The job isn't "write code." It never really was, but we could pretend it was when code was the bottleneck. Now that implementation is basically free, the value is in outcomes.

That means watching production behavior, not just whether the deploy succeeded but whether the system is doing what the business actually needs. It means iterating based on real-world usage instead of ticket descriptions, and staying close enough to the business to notice when needs change. The developer who ships a feature and moves on is less useful than the one who ships it, watches how it performs, and comes back with data about what to do next.

Learn the Tools or Get Left Behind

I want to be blunt about this. It doesn't matter where you are in your career, junior or principal: if you aren't putting real effort into learning how to use AI tools well, you're falling behind quickly.

This isn't like a new framework you can afford to skip because the current one still works fine. It's a change in how software gets built, and the developers who learn to work with AI are producing more, at higher quality, in less time. The gap is going to widen. If you're sitting it out waiting to see how things shake out, they already have.

If you're early in your career, the best investment isn't learning another framework. If you're senior, it's not resting on the assumption that experience alone keeps you relevant. For everyone, the investment is the same:

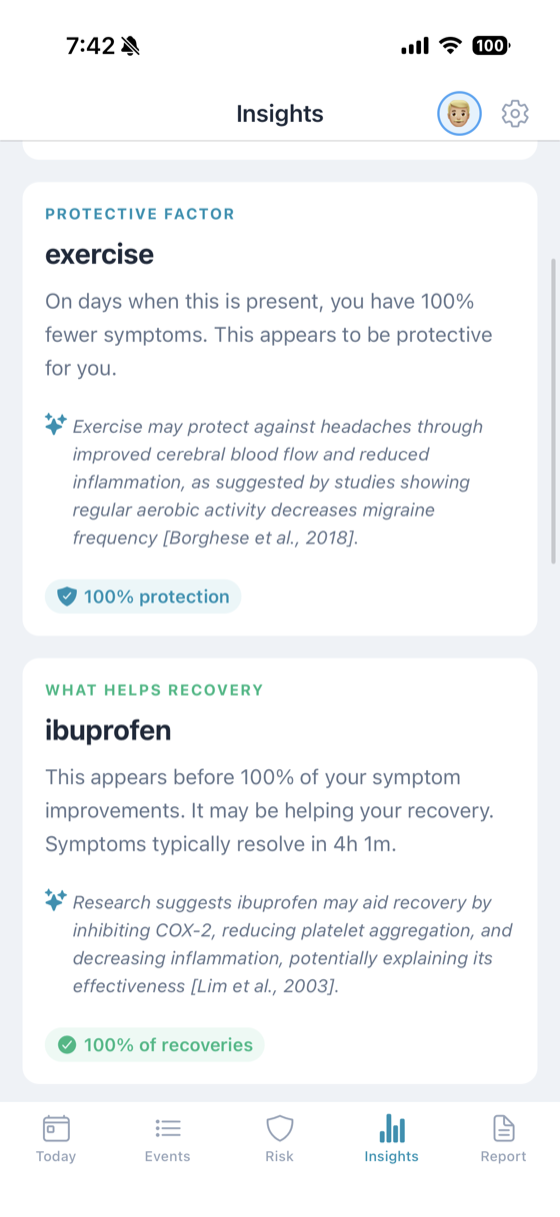

- Writing clearly. Requirements, specs, architectural decision records, incident reports. Clear writing is clear thinking, and clear thinking is what AI needs from you.

- Understanding systems holistically. Not just the code, but the infrastructure, the data flows, the failure modes, the business context. AI can generate components. You need to understand how they fit together.

- Working with AI effectively. Knowing how to describe what you want, validate what you get, and iterate when it's wrong. This is a skill. It takes practice. Start now.

- Evaluating tradeoffs. Every decision has costs. The developer who can articulate those costs is the one who gets trusted with the hard problems.

The job title stays the same, but the leverage doesn't. I handed off the code months ago and I'm not going back. It's the most interesting this job has ever been.