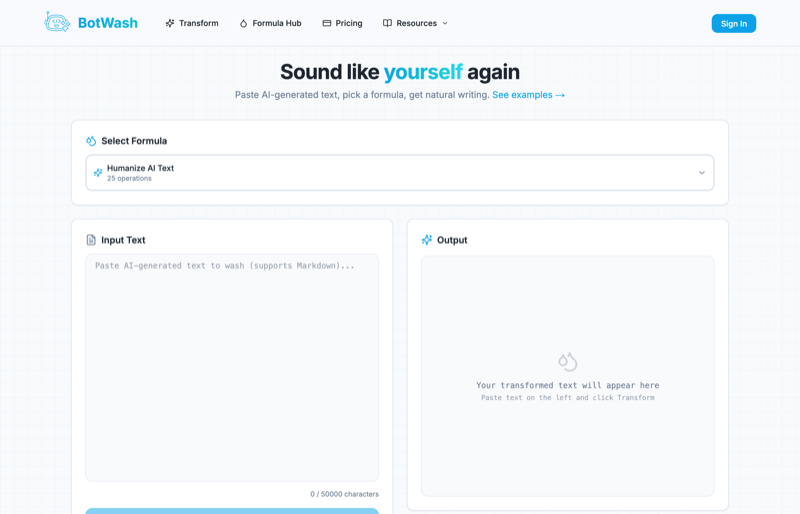

Botwash was, by every measurable standard, a failed product. It never found product-market fit. Not because the idea was bad or the execution was off, but because the market changed before it even launched.

This isn't a complaint. I had a great time building it, I learned a lot, and this post is about what I took away from the experience.

The Premise

LLMs wrote like LLMs. You could spot AI-generated text immediately. The hedge-heavy openings, the five-paragraph essay structure, the word "delve" showing up in places no human would ever put it, and the em dashes. For a solid stretch of 2025, half the internet was posting screenshots of LLM output circling every em dash in red. It was the most reliable AI fingerprint there was.

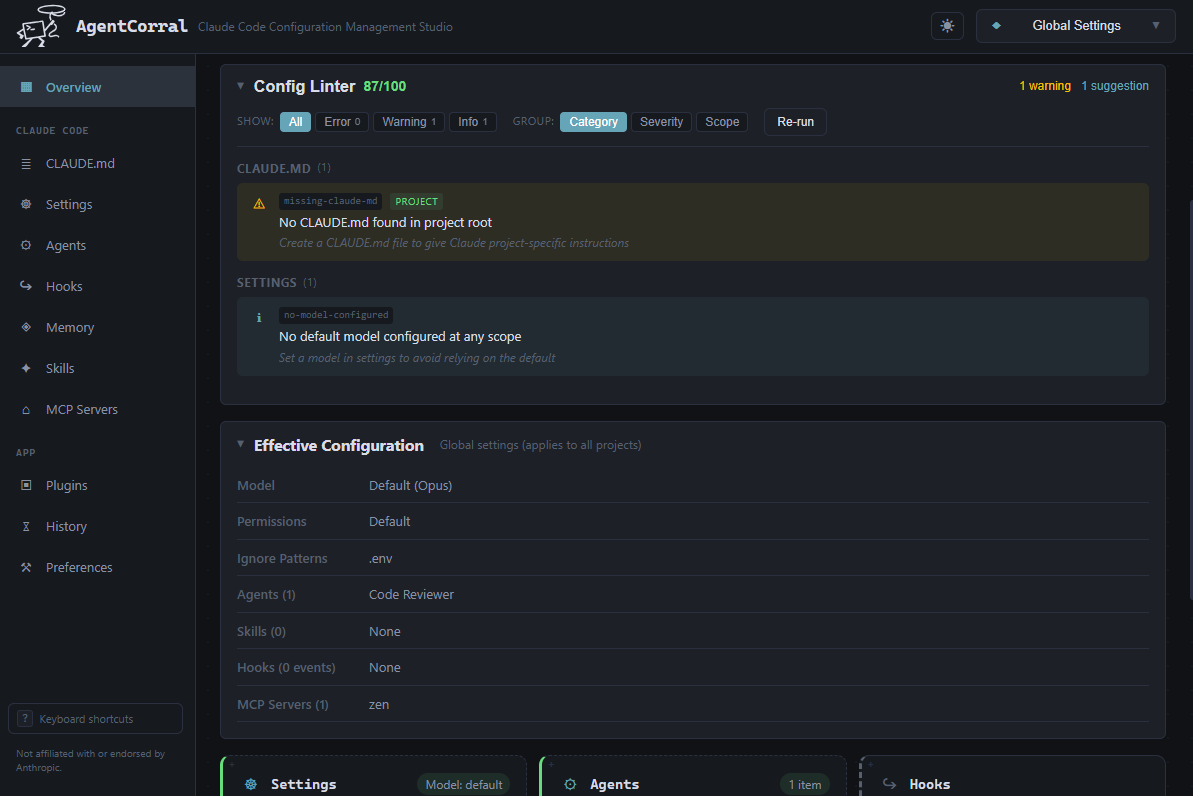

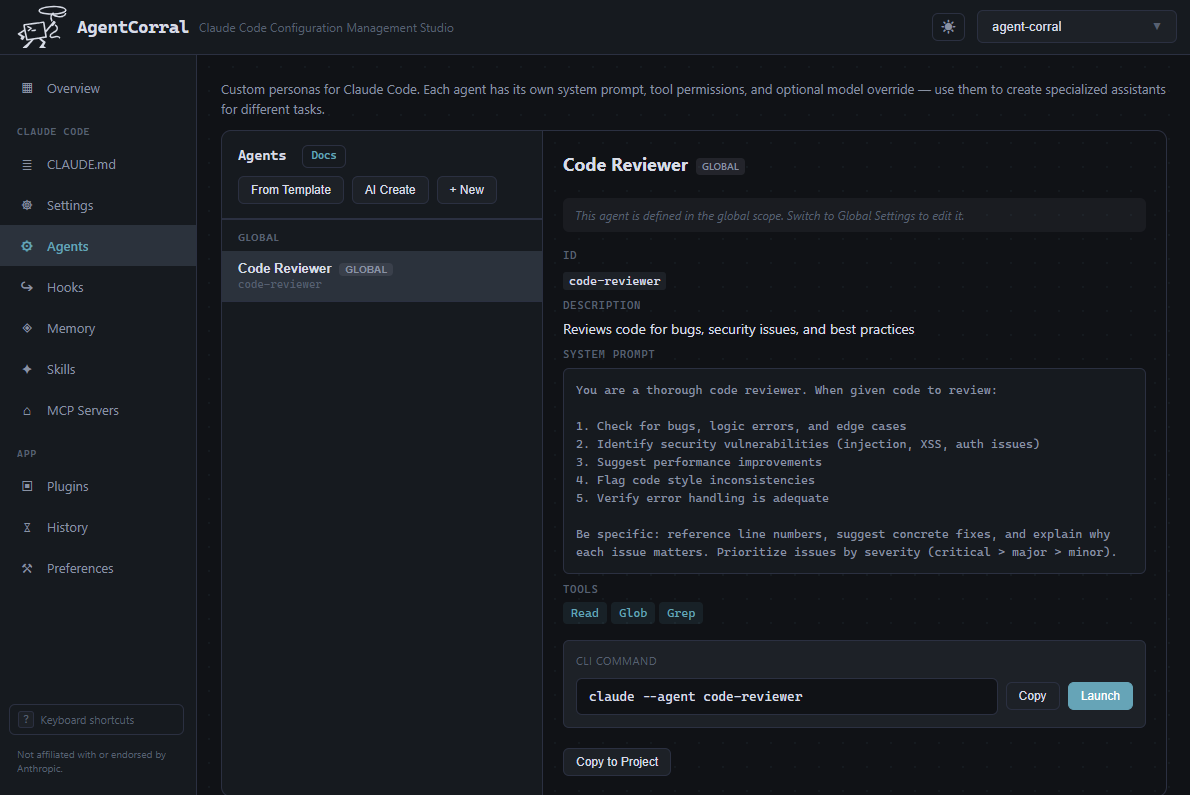

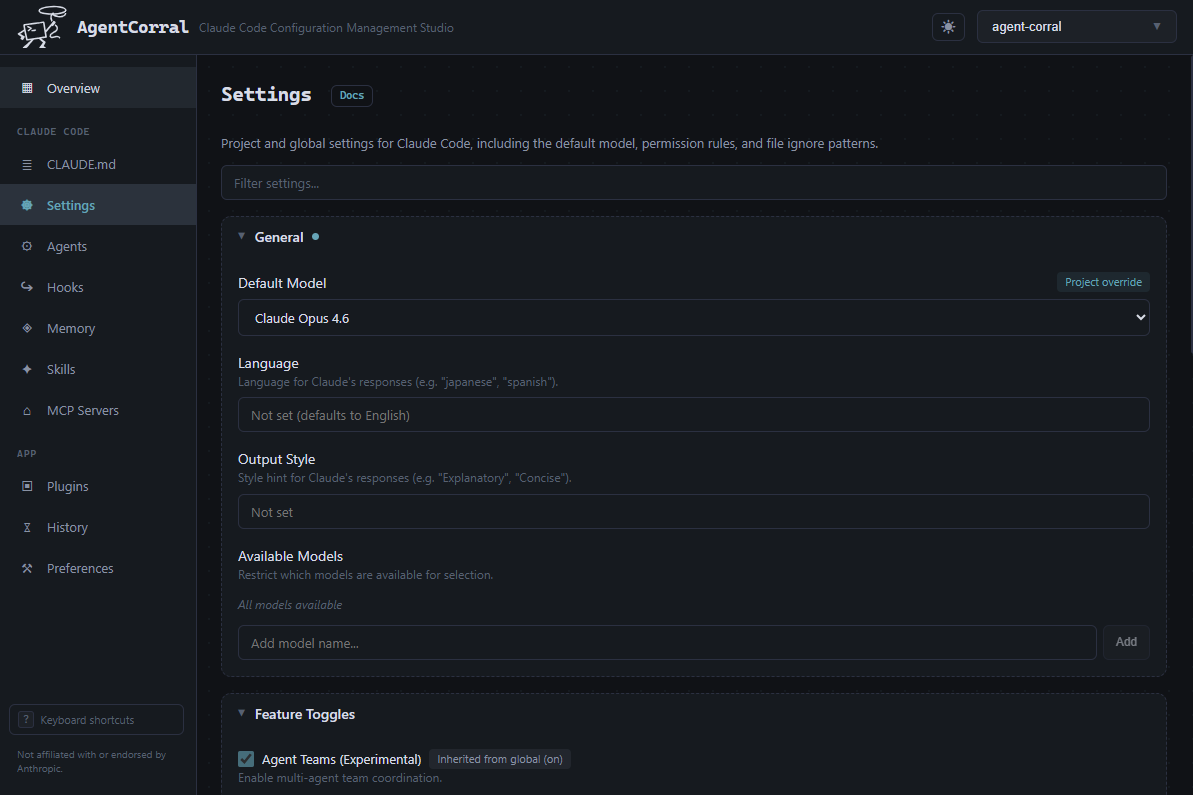

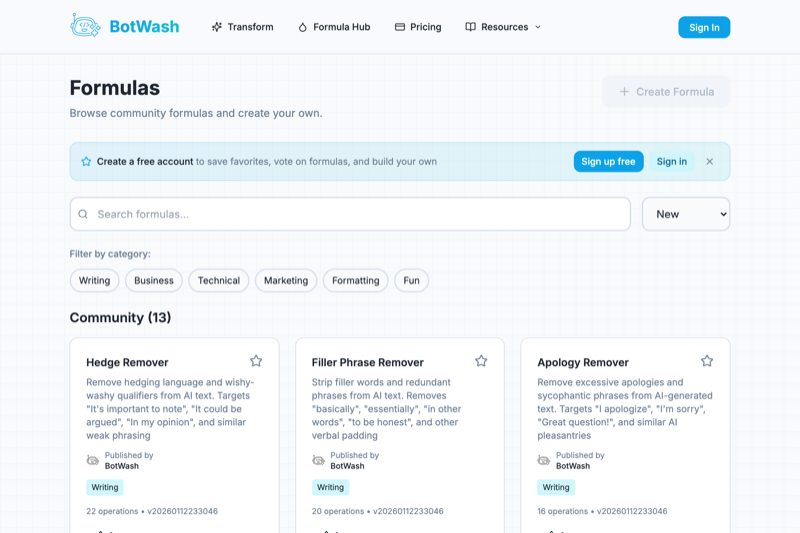

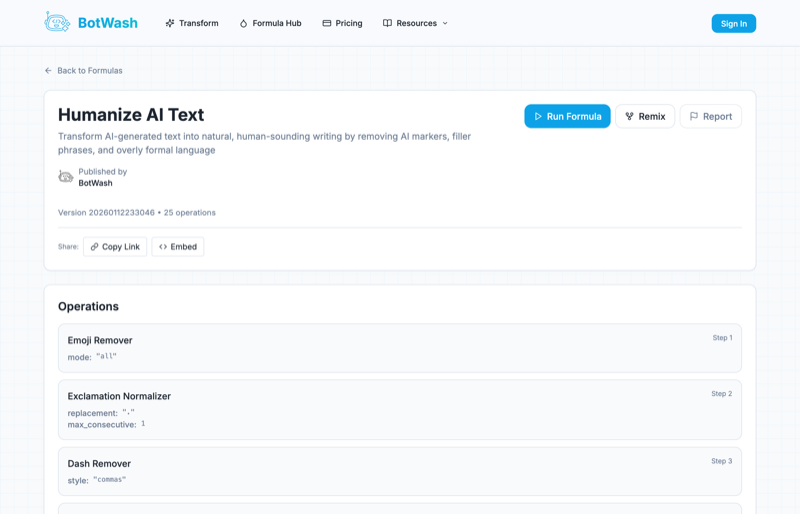

Botwash was a car wash for AI text. Paste in the robotic output, pick a community-made wash formula, and get something that actually sounded human. Users could build, share, and remix reusable transforms that restyled AI text with actual personality. The community aspect was the moat. The more people contributed formulas, the more useful the platform became.

What Happened

While I was building Botwash, the problem it was designed to solve was already disappearing. Toward the end of 2025, a wave of new model releases landed that were fundamentally more capable of following directions about tone, style, and voice. The em dashes quietly disappeared. The "delve" frequency dropped. The essay structure loosened up. It wasn't one model or one update. It was all of them, converging on the same fix at roughly the same time.

By the time Botwash actually launched, you could just tell the model how to write and it would listen. A system prompt with a few style notes got you most of the way to natural output. The problem had largely solved itself.

There was no user momentum to speak of. The people who would have needed Botwash most, content teams and anyone publishing AI-assisted text at scale, had already adapted. A well-crafted system prompt did 80% of what a wash formula could do. The remaining 20% wasn't worth a separate tool. Botwash launched into a market that had moved on.

The Lesson

If your product exists to patch a deficiency in another technology, you're betting that the deficiency will persist. That's a bet against the entire incentive structure of the companies building that technology. Not great odds.

A few things I'd do differently:

- Don't build painkillers for someone else's bug. If the problem you're solving is also on another company's roadmap, their fix ships as a free update. Yours ships as a separate subscription.

- Bet on workflows, not workarounds. Tools that sit between the user and the AI output are inherently fragile. Tools that give users new capabilities are stickier.

- Factor in the rate of change. I underestimated how fast LLMs were improving at the specific thing Botwash addressed. When building in an AI-adjacent space, you need to account for the velocity of the underlying models, not just their current state.

- Ship faster or don't ship at all. If I'd launched six months earlier, Botwash might have caught the tail end of the window. Instead I was still polishing features while the market evaporated. In a space moving this fast, a finished product that's late is worse than a rough product that's on time.

What I Took Away

Building Botwash was a genuinely fun project. The formula engine, a configurable prompt-chaining pipeline with version control and community remix support, was a satisfying technical problem. I learned a lot about building for a community, even if that community never materialized at scale.
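For readers wondering what a prompt-chaining pipeline with versioning and remixing might look like, here is a minimal Python sketch. It is an illustration only, not Botwash's actual code: the `Formula` class, its fields, the `name@version` reference format, and the `fake_model` stand-in for an LLM call are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional

# Hypothetical stand-in for an LLM call: takes a prompt, returns text.
Model = Callable[[str], str]


@dataclass(frozen=True)
class Formula:
    """A reusable chain of prompt templates (illustrative schema only)."""
    name: str
    steps: tuple[str, ...]        # each template must contain "{text}"
    version: int = 1
    parent: Optional[str] = None  # "name@version" this formula was remixed from

    @property
    def ref(self) -> str:
        return f"{self.name}@{self.version}"

    def revise(self, steps: tuple[str, ...]) -> "Formula":
        """Return a new version; the old one is immutable and stays as-is."""
        return replace(self, steps=steps, version=self.version + 1)

    def remix(self, name: str, extra_steps: tuple[str, ...]) -> "Formula":
        """Fork under a new name, recording where the fork came from."""
        return Formula(name=name, steps=self.steps + extra_steps, parent=self.ref)

    def run(self, text: str, model: Model) -> str:
        """Feed each step's output into the next step's prompt."""
        for template in self.steps:
            text = model(template.format(text=text))
        return text


def fake_model(prompt: str) -> str:
    """Toy model for the demo: echoes the body after the instruction line,
    swapping out one tell-tale phrase. A real model call would go here."""
    body = prompt.split("\n\n", 1)[1]
    return body.replace("delve into", "look at")


base = Formula("plain", ("Rewrite plainly:\n\n{text}",))
fork = base.remix("plain-short", ("Shorten:\n\n{text}",))

print(fork.parent)                                   # plain@1
print(base.run("Let's delve into it.", fake_model))  # Let's look at it.
```

Making formulas immutable and having `revise` and `remix` return new objects is one simple way to get history and attribution for free: every version and every fork keeps pointing at exactly what it was built from.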

The failure gave me a sharper filter for evaluating product ideas in AI: is this solving a problem that the foundation model providers are actively trying to eliminate? If yes, the clock is already ticking.

Here's the thing, though: with AI-assisted development, the barrier to entry for building products is the lowest it's ever been. The cost of a failed product is not what it used to be. Botwash didn't take years and a team of ten. It was a solo project built with the help of the same AI tools it was trying to fix. The time investment was real but manageable, and what I got back in lessons was worth more than the time I put in.

Botwash was a good product for a problem that no longer existed. The lesson isn't "don't build things." It's "know what you're betting on, and be honest about the odds." And if you're wrong, the cost of being wrong has never been lower. So build anyway.